Kaodi

> UBI terrible idea. Magic money beans for online chatter.
>
> We need a safety net but yeah, can't say too much more.

Famously, one of the biggest proponents of UBI in Canada was actually a conservative, Hugh Segal.

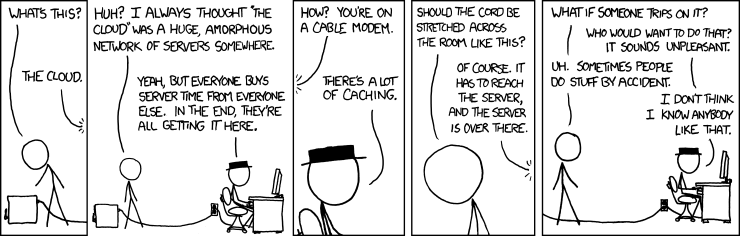

> What makes them dangerous is that we don't know how they are doing what they are doing…

Let’s be clear here: there are two reasons why this is being said. One is that they are focusing on results rather than documenting each evolution of the system, in order to get a working product out the door as fast as possible. The other is that revealing the data the model was trained on, and how it works, would likely open them up to many lawsuits, since the data they used was not owned by them.

> Never would work; someone always opens the box. Better have the box out there in the open and develop the social and practical defences as needed.

Sure. I didn't say it could be put back into the bag. I'll just admit that I'm one who, if I had my way, would have the tech buried so deeply that it would never see the light of day again.

No, this is not just a lack of documentation. The model layers have become so deep that the data scientists developing them can no longer explain how the program manages to solve a problem the way it does. Hence the talk of emergent behavior in these LLMs. For example, GPT-4 was not explicitly trained to perform arithmetic, but it can do it anyway.

To be more accurate than in my earlier post: some companies do purposefully obfuscate how their models work. But from what I have been reading, once you get past about a million parameters, the scientists basically can't explain how the models work anymore, and in some cases the threshold is even lower. For scale, GPT-3, the predecessor of the models behind ChatGPT, has 175 billion parameters, and many newer LLMs are also well over a billion. Unfortunately, the term "black box" conflates two different concepts (which need not be mutually exclusive):

- Purposeful obfuscation for proprietary reasons (or to hide biased data sets)

- Scientists can't explain how the model can do what it does (e.g., emergent behavior)

Scientists Increasingly Can’t Explain How AI Works

AI researchers are warning developers to focus more on how and why a system produces certain results than the fact that the system can accurately and rapidly produce them. (www.vice.com)

Why We Need to See Inside AI's Black Box

A computer scientist explains what it means when the inner workings of AIs are hidden (www.scientificamerican.com)
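To give a feel for the parameter counts mentioned in this thread, here is a rough Python sketch. It assumes the common back-of-the-envelope rule that a decoder-only transformer has about 12 × n_layers × d_model² weights (4·d² for the attention projections plus 8·d² for a feed-forward block four times wider, ignoring embeddings). `dense_params` and `transformer_params` are hypothetical helper names, not from any library; the GPT-3 shape (96 layers, d_model 12288) is the one published in the GPT-3 paper.

```python
# Rough parameter counts for dense networks and decoder-only transformers.
# Back-of-the-envelope estimates only, not exact figures for any production model.

def dense_params(layer_sizes: list[int]) -> int:
    """Weights plus biases for a stack of fully connected layers (hypothetical helper)."""
    total = 0
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        total += fan_in * fan_out + fan_out  # weight matrix + bias vector
    return total


def transformer_params(n_layers: int, d_model: int) -> int:
    """Assumed approximation: ~12 * n_layers * d_model**2, embeddings ignored."""
    return 12 * n_layers * d_model ** 2


if __name__ == "__main__":
    # A toy image classifier: 784 inputs -> 1024 -> 1024 -> 10 outputs.
    print(f"small MLP: {dense_params([784, 1024, 1024, 10]):,}")
    # GPT-3 175B published shape: 96 layers, d_model = 12288.
    print(f"GPT-3-shaped transformer: {transformer_params(96, 12288):,}")
```

Even the toy three-layer classifier clears a million parameters (1,863,690), and the GPT-3-shaped estimate lands near 174 billion, close to the published 175 billion. Counting the parameters is easy; explaining what each one contributes is the hard part.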

> They could know how they work, but they chose not to document and regression-test after each new data point was included. It was their choice to do it this way so that they could get it done quicker. It would take a tremendous amount of time, and they are more interested in beating their competitors to market than in advancing human knowledge.

Again, this is not true in all cases. In some cases, yes, companies don't divulge the training data, the initial parameters, and/or the model architecture for proprietary reasons. But in other cases, we simply don't know how the model works, only that it does, through experimentation. It's not just that data scientists won't tell you how their architecture works; it's that they are unable to tell you, even if they wanted to. This is all the more true once you get into the big leagues, with LLMs having billions of parameters trained on petabytes of data.

> We do not have the tools to build a functioning brain a neuron at a time, but we do have that ability with these algorithms. We could be discovering how the evolution of consciousness functions by watching a brain be built one neuron at a time, but they would rather try to make fat stacks of cash.

Sorry, but that's just not going to happen, at least not until we get quantum computers; then, probably. It's also questionable why we would need or want to "recreate" a human brain: it would be an imperfect model of our own brain, and may not be necessary for true AGI.

> What, me worry?

Unlikely. Anything that runs on microprocessors will find a solution to us that doesn't involve bathing the world in EMPs.

People don't know how much compute power it takes to train these models. Everyone thinks cloud compute is infinite, but it isn't [link to a pdf].
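For a rough sense of the compute involved, a widely cited rule of thumb estimates training cost at about 6 × N × D floating-point operations, where N is the parameter count and D is the number of training tokens. The sketch below plugs in GPT-3's published figures (175 billion parameters, about 300 billion tokens). The accelerator speed (312 TFLOP/s, the A100's dense BF16 peak) and the 40% sustained utilization are assumptions for illustration, and the helper names are hypothetical.

```python
# Back-of-the-envelope training compute using the rule of thumb C ~= 6 * N * D
# (N = parameters, D = training tokens). Real runs also pay for failed runs,
# hyperparameter searches, evaluation, and hardware inefficiency.

SECONDS_PER_DAY = 86_400


def training_flops(n_params: float, n_tokens: float) -> float:
    """~6 FLOPs per parameter per token (forward + backward pass)."""
    return 6 * n_params * n_tokens


def gpu_days(total_flops: float, flops_per_gpu: float, utilization: float) -> float:
    """Days of work for ONE accelerator at the given sustained utilization."""
    seconds = total_flops / (flops_per_gpu * utilization)
    return seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    # GPT-3 published figures: 175e9 parameters, ~300e9 training tokens.
    c = training_flops(175e9, 300e9)
    print(f"training compute: {c:.2e} FLOPs")
    # Assumed hardware: 312e12 FLOP/s peak (A100 dense BF16) at 40% utilization.
    days = gpu_days(c, 312e12, 0.40)
    print(f"single-GPU time: {days:,.0f} days (~{days / 365:,.0f} years)")
```

Under those assumptions, a single GPU would need roughly 29,000 days (around 80 years), which is why training is spread across large clusters of accelerators. The estimate leaves out everything except the final training run, so real totals are higher.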
