D&D General DALL·E 3 does amazing D&D art

- Thread starter M.T. Black


RoughCoronet0

Dragon Lover

Saracenus

Always In School Gamer

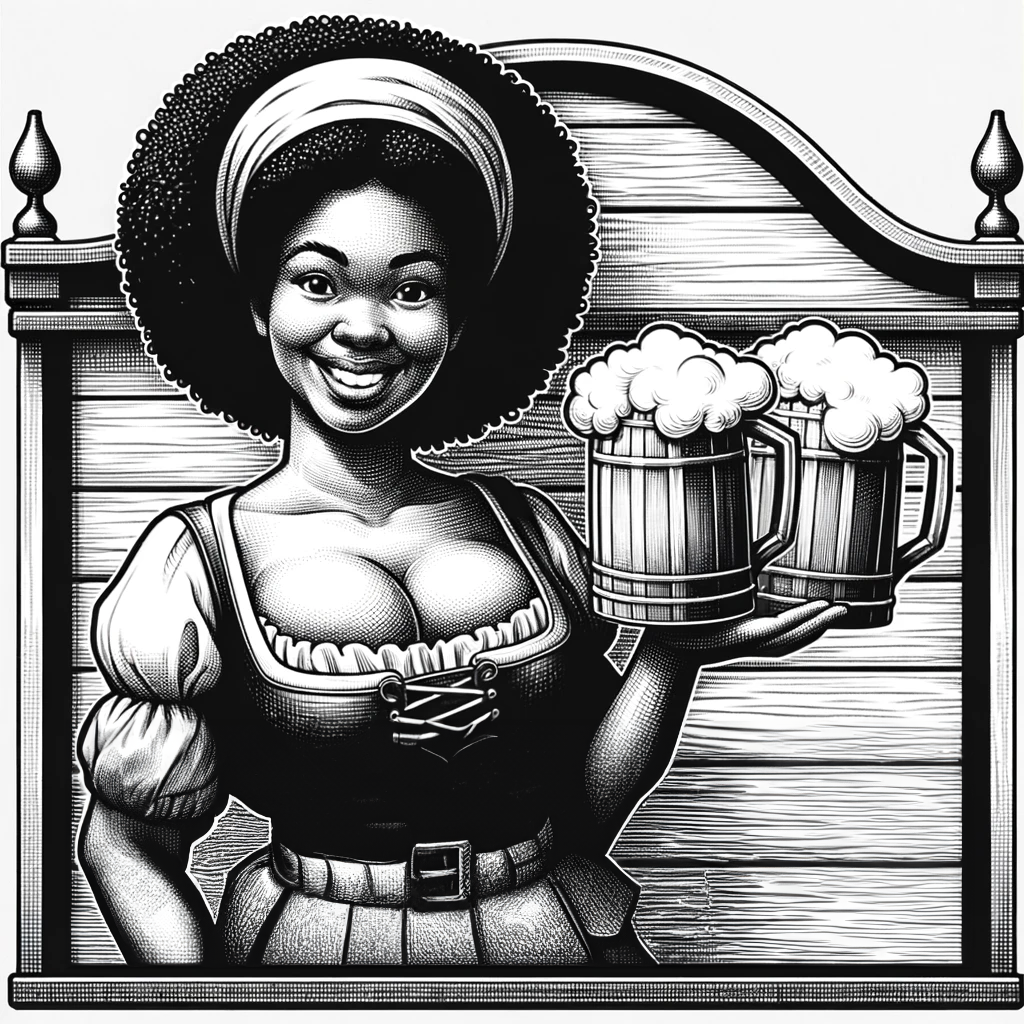

Here is something more in line with how the sign is described in T1 The Village of Hommlet:

Large walled building with a square wooden sign showing a buxom and smiling girl holding a flagon of beer: This is the Inn of the Welcome Wench, a place renowned for its good food and excellent drink.

This feels pertinent: https://spectrum.ieee.org/midjourney-copyright

My takeaway: The copyright infringement does not expose the vendor of the model but me as a user.



Guest 7037866

Guest

The larger issue is that the AI doesn't have infinite images or material to work from. It uses what is out there. Unfortunately, a lot of that comes from copyrighted material that companies and people post online (often without indicating that it is copyrighted).

I've never, not once, generated anything that is even remotely like what is online and copyrighted. Largely this is because I use very long, specific prompts, which keep the AI from simply reworking something that is copyrighted.

While the article was certainly a good one, many of the prompts are SO generic that the AI uses what it has the most samples of, which is typically copyrighted material.

So it doesn't surprise me that the onus will fall on the user. The company can certainly do what it can, but it offers a service, and what anyone chooses to do with that service is really on them, particularly anything they then use commercially.

Crimson Longinus

Legend

That is unreasonable, given that the AI can reproduce copyrighted material even when the user didn't ask it to. The user cannot reasonably be aware of every piece of copyrighted content in existence, so they cannot be expected to recognise when the AI has done so.


Guest 7037866

Guest

It is not "unreasonable". While I agree that no user can be aware of every bit of copyrighted material, the prompts in the article are generalized enough that a user should (in all likelihood) realise the result infringes on copyrights.

Beyond that, it is simple enough for a user who has (mistakenly) used an infringing AI image to remove it when it is brought to their attention. If monetary gain has already occurred, that user assumes the responsibility of paying any penalties that might be levied.

I'm sure AI models will have more and more safeguards against this sort of thing in the future, but for the present, if a user agrees to use a potentially flawed system, they are accepting the responsibilities and pitfalls of using that system.

Think of it like this: if a company releases a beta OS (which likely still has flaws) and you agree to install it knowing those risks, the responsibility is now yours.

In that light, I do believe all AI models should have a "this system could create material which is copyright infringement" clause.

All I want is a magic pillar lying on its side on an open train car... why, oh why, can it not do that? It does not understand the idea of an open train car at all.

You could try adding a sentence describing what an open train car is. I don't know what one is, for example, and I'd like to try to generate something along the lines you propose, but I'm stuck on understanding it. Is it a car with a side door open?
